Contents

- 📊 Introduction to Content Moderation

- 🚫 The Challenges of Moderating User-Generated Content

- 🤖 The Role of AI in Content Moderation

- 🚨 The Importance of Human Moderators

- 📈 The Impact of Content Moderation on Online Communities

- 🔒 The Tension Between Free Speech and Content Moderation

- 📊 The Economics of Content Moderation

- 🔍 The Future of Content Moderation

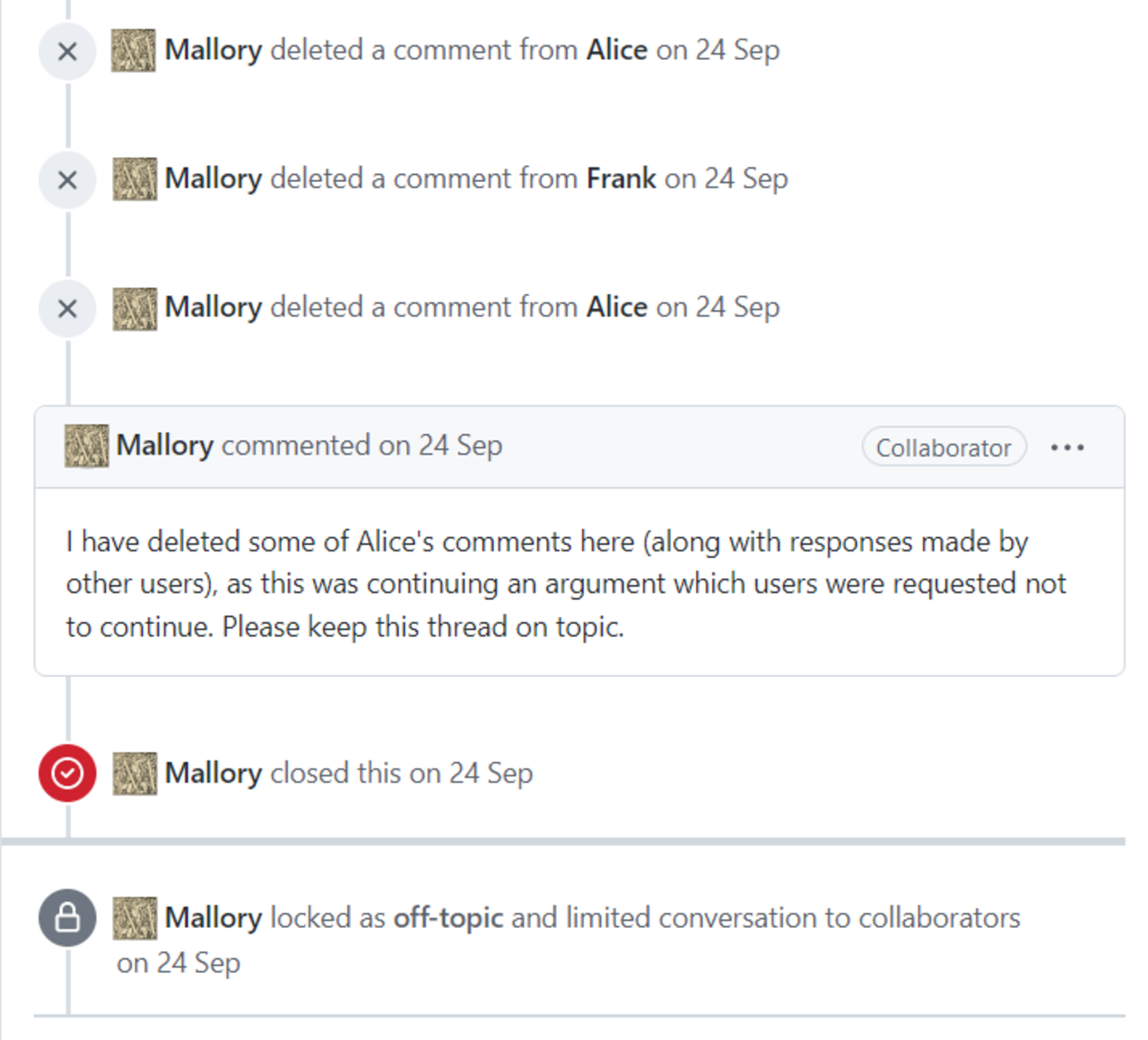

- 📝 Case Studies in Content Moderation

- 🤝 The Role of Governments in Content Moderation

- 📚 Conclusion and Recommendations

- Frequently Asked Questions

- Related Topics

Overview

Content moderation is a core function of online platforms and has become a heavily debated topic in recent years. As social media has grown, so has the need for moderation, and the process remains controversial. Companies like Facebook, Twitter, and YouTube have implemented various moderation policies, which have been criticized as either too lenient or too restrictive. According to a report by the Pew Research Center, 64% of adults in the US believe that social media companies have a responsibility to remove offensive content, but 47% also think that these companies often remove too much content. The issue is further complicated by the fact that content moderation is often outsourced to third-party companies, which can lead to inconsistent and biased decision-making. As the online landscape continues to evolve, platforms need more effective and transparent moderation strategies that balance free speech with online safety.

The vibe score for content moderation is currently 62, indicating a moderate level of cultural energy around the topic. Its position on the controversy spectrum is high, with many experts arguing that the current system is flawed and in need of reform. Notable figures like Mark Zuckerberg and Jack Dorsey have weighed in on the issue, with some advocating for more stringent moderation policies and others pushing for a more hands-off approach. The topic intelligence for content moderation includes key people like Sarah Kendzior, who has written extensively on the topic, and events like the 2018 Congressional hearings on social media regulation. Entity relationships between companies like Facebook and Twitter, and influence flows between policymakers and tech executives, also shape the content moderation landscape.

📊 Introduction to Content Moderation

Content moderation is central to maintaining a safe and respectful online environment and is a core part of trust and safety frameworks. It is the systematic process of identifying, reducing, or removing user contributions that are irrelevant, obscene, illegal, harmful, or insulting. Problematic content may be removed outright or left in place with a warning label. Alternatively, platforms may let users block and filter content themselves according to their own preferences, an approach tied to online safety and digital wellbeing. The goal is to create a positive user experience while protecting users from harmful or offensive content. For instance, social media platforms have implemented various content moderation strategies to address online harassment and cyberbullying.
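The three options described above (removal, warning labels, and user-side blocking and filtering) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `Action`, `Post`, `ViewerPrefs`, and `visible_feed` are hypothetical and do not correspond to any particular platform's API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    """Platform-side outcome for a post (hypothetical names)."""
    KEEP = "keep"
    LABEL = "label"    # content stays up behind a warning label
    REMOVE = "remove"  # content is taken down


@dataclass
class Post:
    author: str
    text: str
    action: Action = Action.KEEP
    label: str = ""  # warning text, used only when action is LABEL


@dataclass
class ViewerPrefs:
    """User-side controls: each viewer blocks and filters independently."""
    blocked_authors: set[str] = field(default_factory=set)
    muted_words: set[str] = field(default_factory=set)


def visible_feed(posts: list[Post], prefs: ViewerPrefs) -> list[str]:
    """Apply platform decisions first, then the viewer's own filters."""
    feed = []
    for post in posts:
        if post.action is Action.REMOVE:
            continue  # removed for everyone
        if post.author in prefs.blocked_authors:
            continue  # hidden for this viewer only
        if set(post.text.lower().split()) & prefs.muted_words:
            continue  # hidden for this viewer only
        if post.action is Action.LABEL:
            feed.append(f"[{post.label}] {post.text}")
        else:
            feed.append(post.text)
    return feed
```

In this sketch the platform's decision applies to every viewer, while blocks and muted words affect only the viewer who set them, which is the distinction the paragraph draws between moderation and user-controlled filtering.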

🚫 The Challenges of Moderating User-Generated Content

Moderating user-generated content poses several challenges. The first is the sheer volume of content that needs to be reviewed, which can overwhelm human moderators. The second is the difficulty of moderating across different languages and cultural contexts. Third, online threats keep evolving and new forms of harmful content keep emerging, so moderation strategies need continuous updates. As a result, many platforms rely on artificial intelligence and machine learning algorithms to support their moderation efforts. However, these technologies are not foolproof: they can flag acceptable content by mistake or miss problematic content. To address these challenges, platforms can combine community guidelines with user reporting mechanisms.

🤖 The Role of AI in Content Moderation

AI plays an increasingly important role in content moderation. AI-powered tools can automate parts of the moderation process, reducing the workload for human moderators and freeing them to focus on more complex and nuanced cases. For example, AI can be used to detect and remove spam, hate speech, and other forms of problematic content. However, AI is not a replacement for human judgment, and its limitations must be acknowledged: algorithms can be biased, and their decisions may not reflect human values or account for context. It is therefore essential to have human moderators review and correct AI decisions and provide feedback that improves AI performance. This hybrid approach, in line with human-centered AI, helps make content moderation both efficient and effective.
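One common way to picture the hybrid approach is confidence-based triage: automation handles the clear cases and routes everything uncertain to a person. The sketch below assumes a classifier that returns a probability that an item violates policy. The thresholds, the `triage` function, and the keyword-based `toy_classifier` are all hypothetical stand-ins, not a description of any real system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    action: str   # "remove", "allow", or "human_review"
    score: float  # classifier's estimated probability of a violation


def triage(text: str,
           classifier: Callable[[str], float],
           remove_above: float = 0.95,
           allow_below: float = 0.05) -> Decision:
    """Act automatically only when the classifier is confident.

    The threshold values are illustrative assumptions, not recommendations.
    """
    score = classifier(text)
    if score >= remove_above:
        return Decision("remove", score)
    if score <= allow_below:
        return Decision("allow", score)
    # Ambiguous cases (humor, irony, missing context) go to a person.
    return Decision("human_review", score)


def toy_classifier(text: str) -> float:
    """Stand-in for a trained model: simple keyword lookup, illustration only."""
    lowered = text.lower()
    if "buy cheap pills" in lowered:
        return 0.99
    if "idiot" in lowered:
        return 0.60
    return 0.01
```

Widening the gap between the two thresholds sends more items to human review, trading moderator workload for fewer automated mistakes. Corrections made by reviewers could be logged and used to retrain or recalibrate the classifier, which is the feedback loop the paragraph describes.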

🚨 The Importance of Human Moderators

Human moderators play a critical role in content moderation. They bring a level of nuance and understanding that AI systems currently lack. Human moderators can consider the context and intent behind a piece of content, as well as its potential impact on different users and communities, and they can weigh factors such as humor, irony, and cultural references that algorithms often miss. However, human moderators are not immune to bias and error, and their decisions can be influenced by personal experience and cultural background. To mitigate these risks, platforms can implement moderator training programs and provide diversity and inclusion guidelines so that moderation decisions are fair and consistent. Human moderators can also work alongside AI-assisted tools to improve moderation outcomes.

📈 The Impact of Content Moderation on Online Communities

Content moderation can have a significant impact on online communities. Effective moderation helps create a positive and respectful environment where users feel safe and supported. Inadequate or biased moderation, on the other hand, can lead to the spread of harmful content, the suppression of marginalized voices, and the erosion of trust in online platforms. To avoid these outcomes, platforms must develop and implement moderation policies that are transparent, consistent, and fair. They must also engage with their users and communities to understand their needs and concerns and to keep moderation decisions aligned with community values and norms. For instance, platforms can establish community standards and content moderation policies to guide moderation decisions, and they can use user engagement strategies to foster a sense of community and promote positive interactions among users.

🔒 The Tension Between Free Speech and Content Moderation

The tension between free speech and content moderation is a longstanding debate. On one hand, online platforms have a responsibility to protect their users from harmful and offensive content. On the other hand, they must respect the right to free speech and expression, which is essential for democratic societies. To navigate this tension, platforms can adopt moderation policies that are nuanced and context-dependent, taking into account the severity of the content, the intent of the user, and the potential impact on different communities. They must also give users transparent and accessible ways to appeal moderation decisions and ensure that those decisions are made fairly and without bias. For example, platforms can establish appeals processes and publish transparency reports to promote accountability and trust.
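To make the link between appeals and transparency reporting concrete, here is a minimal sketch of how removal and appeal records might be aggregated per policy. The `Case` record, the policy names, and the `transparency_summary` function are hypothetical. Real transparency reports vary widely in what they count.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Case:
    policy: str               # hypothetical policy name, e.g. "spam"
    appealed: bool = False
    overturned: bool = False  # only meaningful when appealed is True


def transparency_summary(cases: list[Case]) -> dict[str, dict]:
    """Count removals, appeals, and overturned appeals for each policy."""
    removed = Counter(c.policy for c in cases)
    appealed = Counter(c.policy for c in cases if c.appealed)
    overturned = Counter(c.policy for c in cases if c.appealed and c.overturned)

    summary = {}
    for policy, total in removed.items():
        n_appealed = appealed[policy]
        n_overturned = overturned[policy]
        summary[policy] = {
            "removed": total,
            "appealed": n_appealed,
            "overturned": n_overturned,
            # Undefined when nobody appealed, so report None instead of 0.
            "overturn_rate": n_overturned / n_appealed if n_appealed else None,
        }
    return summary
```

A persistently high overturn rate for one policy would suggest that the policy, or the way it is enforced, deserves a closer look. That is the kind of accountability signal the paragraph has in mind.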

📊 The Economics of Content Moderation

The economics of content moderation are complex. On one hand, moderation is costly and resource-intensive, requiring significant investment in technology, personnel, and infrastructure. On the other hand, effective moderation can help platforms attract and retain users, increase engagement and revenue, and reduce the risk of regulatory scrutiny and reputational damage. To balance these competing interests, platforms must develop moderation strategies that are efficient, effective, and scalable. They must also find business models and revenue streams that can cover the costs of moderation, such as subscriptions and advertising. Platforms can also use cost-benefit analysis to evaluate the economic impact of moderation decisions.
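The cost side of such an analysis can be illustrated with simple arithmetic. The sketch below compares the daily cost of two hypothetical strategies. Every figure in the usage example is invented for illustration, and the `Strategy` fields and `daily_cost` function are assumptions rather than an established model. It deliberately leaves out the benefit side (retention, revenue, reduced regulatory risk), which is much harder to quantify.

```python
from dataclasses import dataclass


@dataclass
class Strategy:
    name: str
    human_review_fraction: float     # share of items a person looks at (0 to 1)
    cost_per_human_review: float     # currency units per reviewed item
    automation_cost_per_item: float  # currency units per item, 0 if none


def daily_cost(strategy: Strategy, items_per_day: int) -> float:
    """Human review cost plus automation cost for one day of content."""
    human = (items_per_day
             * strategy.human_review_fraction
             * strategy.cost_per_human_review)
    automated = items_per_day * strategy.automation_cost_per_item
    return human + automated


# Hypothetical figures, chosen only to show the calculation.
all_human = Strategy("all-human", 1.0, 0.10, 0.0)
hybrid = Strategy("hybrid", 0.05, 0.10, 0.001)

for s in (all_human, hybrid):
    print(f"{s.name}: {daily_cost(s, 1_000_000):,.0f} per day")
```

A lower cost alone does not make a strategy better: a fuller analysis would also estimate the cost of errors, such as harmful content left up or legitimate content removed, under each strategy.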

🔍 The Future of Content Moderation

The future of content moderation is uncertain and rapidly evolving. As AI and machine learning technologies advance, they are likely to play an increasingly important role, but their limitations and biases must be acknowledged and addressed. The rise of new platforms and technologies, such as virtual reality and augmented reality, will also require new approaches to moderation. To prepare, platforms must invest in research and development, collaborate with experts and stakeholders, and develop flexible moderation strategies that can respond to emerging threats and opportunities. For instance, platforms can explore hybrid approaches that combine human and AI-based moderation methods.

📝 Case Studies in Content Moderation

Case studies can provide valuable lessons for content moderation. For example, the experiences of Facebook and Twitter in addressing online harassment and hate speech can inform the development of moderation policies and strategies. Similarly, the approaches of TikTok and Instagram to AI-powered moderation tools can highlight the potential benefits and limitations of these technologies. By studying such cases and sharing best practices, platforms can improve their moderation efforts and create safer and more respectful online environments. Platforms can also use benchmarking and industry standards to evaluate and improve their moderation practices.

🤝 The Role of Governments in Content Moderation

Governments play an increasingly important role in content moderation. They can regulate online content and protect users from harmful and offensive material, but they must also respect the right to free speech and expression and avoid imposing overly broad or restrictive regulations. To balance these competing interests, governments can develop regulations that are nuanced and context-dependent, taking into account the severity of the content, the intent of the user, and the potential impact on different communities. They must also engage with online platforms and stakeholders to ensure that regulations are effective, efficient, and fair. For instance, governments can establish regulatory frameworks and industry guidelines to support content moderation efforts.

📚 Conclusion and Recommendations

In conclusion, content moderation is a complex challenge that requires a nuanced and comprehensive approach grounded in best practices. By developing and implementing effective moderation strategies, online platforms can create safer and more respectful environments, protect their users from harmful and offensive content, and promote the values of free speech and expression. This requires a deep understanding of the challenges and opportunities of content moderation, as well as a commitment to transparency, accountability, and continuous improvement. As the online landscape continues to evolve, platforms need to anticipate emerging threats and develop new approaches that respond to the needs of users and communities. Continuous learning and improvement cycles can help platforms refine their moderation practices and promote a culture of safety and respect online.

Key Facts

- Year: 2022

- Origin: The concept of content moderation has its roots in the early days of the internet, but it has gained significant attention in recent years due to the rise of social media and online platforms.

- Category: Technology and Society

- Type: Concept

Frequently Asked Questions

What is content moderation?

Content moderation is the systematic process of identifying, reducing, or removing user contributions that are irrelevant, obscene, illegal, harmful, or insulting. This process may involve either direct removal of problematic content or the application of warning labels to flagged material. As an alternative approach, platforms may enable users to independently block and filter content based on their preferences. The goal of content moderation is to create a positive user experience, while also protecting users from harmful or offensive content. For instance, social media platforms have implemented various content moderation strategies to address online harassment and cyberbullying.

Why is content moderation important?

Content moderation is important because it helps create a positive and respectful online environment, where users feel safe and supported. Effective content moderation can help reduce the spread of harmful and offensive content, promote the values of free speech and expression, and protect users from online harassment and cyberbullying. Additionally, content moderation can help online platforms attract and retain users, increase engagement and revenue, and reduce the risks of regulatory scrutiny and reputational damage. For example, platforms can establish community standards and content moderation policies to guide moderation decisions. Furthermore, platforms can leverage user engagement strategies to foster a sense of community and promote positive interactions among users.

What are the challenges of content moderation?

Content moderation faces several challenges. The first is the sheer volume of content that needs to be reviewed, which can overwhelm human moderators. The second is the difficulty of moderating across different languages and cultural contexts. Third, online threats keep evolving and new forms of harmful content keep emerging, so moderation strategies need continuous updates. As a result, many platforms rely on artificial intelligence and machine learning algorithms to support their moderation efforts. However, these technologies are not foolproof: they can flag acceptable content by mistake or miss problematic content.

How can content moderation be improved?

Content moderation can be improved by developing and implementing effective content moderation strategies, investing in research and development, collaborating with experts and stakeholders, and providing transparent and accessible mechanisms for users to appeal moderation decisions. Additionally, platforms can leverage hybrid approaches that combine human and AI-based moderation methods, as well as cost-benefit analysis to evaluate the economic impact of content moderation decisions. Furthermore, platforms can establish community standards and content moderation policies to guide moderation decisions, and provide moderator training programs to ensure that moderation decisions are fair and consistent.

What is the role of AI in content moderation?

AI plays an increasingly important role in content moderation. AI-powered tools can automate parts of the moderation process, reducing the workload for human moderators and freeing them to focus on more complex and nuanced cases. For example, AI can be used to detect and remove spam, hate speech, and other forms of problematic content. However, AI is not a replacement for human judgment: algorithms can be biased, and their decisions may not reflect human values or account for context. It is therefore essential to have human moderators review and correct AI decisions and provide feedback that improves AI performance.

What is the future of content moderation?

The future of content moderation is uncertain and rapidly evolving. As AI and machine learning technologies advance, they are likely to play an increasingly important role, but their limitations and biases must be acknowledged and addressed. The rise of new platforms and technologies, such as virtual reality and augmented reality, will also require new approaches to moderation. To prepare, platforms must invest in research and development, collaborate with experts and stakeholders, and develop flexible moderation strategies that can respond to emerging threats and opportunities.

How can governments support content moderation efforts?

Governments can support content moderation efforts by developing and implementing regulations that are nuanced and context-dependent, taking into account factors such as the severity of the content, the intent of the user, and the potential impact on different communities. They must also engage with online platforms and stakeholders to ensure that regulations are effective, efficient, and fair. For instance, governments can establish regulatory frameworks and industry guidelines to support content moderation efforts. Additionally, governments can provide funding and resources to support research and development in content moderation, as well as training and education programs for moderators and stakeholders.